By 2022, 90% of all new apps will be built using a microservices design

– IDC

Microservices architecture represents a fundamental change in how software is developed and is one of the most significant developments in the software industry. The IDC figure above reflects the transition we have witnessed from monolithic to microservices design.

This piece looks at the shift from monolithic to microservices design in business application development, the key reasons behind it, and why adopting microservices at scale marks the beginning of a technological change from conventional Enterprise Content Management to the Content Services Platform.

Understanding Monolith

A monolithic design is a single, self-contained application in which the persistence, service, and display layers are tightly coupled. Even a small change to any component of the software necessitates redeploying the entire application.

Monolithic Architecture

Why is Monolith Not an Appropriate Architecture for Cloud?

Let’s look at why monoliths act as roadblocks when it comes to flexibility, scalability, and organizations’ need for systems that can be adjusted in response to new business requirements.

There is no way to isolate errors

Monoliths are tightly interwoven and large in scope. As a result, a change to one piece of functionality frequently affects several parts of the system and can have unintended consequences. A monolithic system consists of several components, but they are all built and shipped as a single unit, so there are no boundaries between them. There is also no assurance that a new release will affect only the part that was changed.

Scalability is painful

Scaling individual components of a monolithic application is difficult and resource-intensive. In most circumstances, scaling a monolith means running many instances of the whole system, which wastes memory and processing resources on components that do not need the extra capacity.
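
The overhead can be made concrete with a small back-of-the-envelope calculation. The sketch below is illustrative only: the component names and memory figures are hypothetical, and it simply compares replicating the whole application with replicating only the component under load.

```python
# Hypothetical per-instance memory footprint (MB) of each component.
COMPONENT_MEMORY_MB = {"auth": 200, "content": 800, "search": 500}


def monolith_memory(replicas: int) -> int:
    """Every replica carries all components, whether or not they need scaling."""
    return replicas * sum(COMPONENT_MEMORY_MB.values())


def per_component_memory(replicas_by_component: dict[str, int]) -> int:
    """Each component is replicated only as often as its own load requires."""
    return sum(
        COMPONENT_MEMORY_MB[name] * count
        for name, count in replicas_by_component.items()
    )


# Assume only the content component needs 4 instances to absorb a load spike.
monolith_total = monolith_memory(4)
component_total = per_component_memory({"auth": 1, "content": 4, "search": 1})

print(f"Whole application x4: {monolith_total} MB")
print(f"Content x4, others x1: {component_total} MB")
```

With these assumed numbers, replicating the whole application uses 6000 MB, while replicating only the busy component uses 3900 MB.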

Tiresome and time-consuming deployments

You must rebuild and redeploy the entire monolith to the cloud even when only a minor update is required or new functionality is added to a single component. As a result, updates to a monolithic program take time and interfere with rapid release cycles.

Lack of flexibility

Because everything inside a monolith is closely connected, extending the system to use multiple tools and tech stacks is difficult. The monolithic design also makes it difficult to choose better or new technologies.

Enterprise applications’ continued success depends on continuous experimentation, user feedback, and delivery of new features. This is a major driving force for us at Newgen Software to switch from monolithic architectures to microservices.

What is the best course of action then?

Cloud-optimized Architecture

Cloud-native apps break all the conventional application rules. They can readily scale up and down as workloads vary, and they can route around failures and recover from them. Reusing components makes development and deployment faster and more effective. However, simply moving to the cloud does not by itself deliver any of these benefits.

We made the decision to modify the architecture and model of our application to fully benefit from the cloud.

Let’s begin by defining what a microservice is.

What is Microservice Architecture?

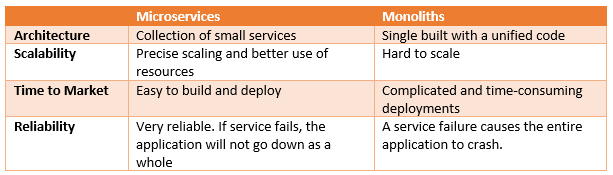

Microservices is a software development technique that divides applications into loosely coupled services. Instead of merging everything into one platform, as the monolithic approach does, microservices take a modular approach to constructing applications.

Dividing large systems into smaller services with well-defined, restricted APIs reduces coupling and makes it easier to build functionality using generally accepted design concepts such as separation of concerns and the single responsibility principle.

To reduce the system complexity, large systems are divided into a number of distinct services, each having a separate set of business rules and a data store. Microservice-based applications may scale autonomously, which sets them apart from conventional monolithic applications.

The motivation behind shifting to microservices is:

- A move from on-premises infrastructure to the cloud

- The transformation of virtual machines into containers

- A greater developer friendliness of open-source tools and cloud-native services

Case in Point: Newgen’s Move from Monolith to Microservices Architecture

Let’s go through a concrete illustration of how we at Newgen Software took a monolithic system and decomposed it into a cloud-optimized microservices-based system. Given the issues with monolithic systems and the benefits of a microservices design, let’s move on to the journey itself.

Identifying Business Use Cases

Before finalizing the approach, we identified the following business use cases for the content services platform:

- Using the back-end for storing high-velocity documents and messages

- Using the back-end for storing and managing large video files

- Using the platform as a video KYC back-end to store and manage the content

The Starting Point

The engineering team examined the functionalities of the conventional monolithic ECM and identified the specific services that needed to be made accessible.

To address critical business needs, it was decided to build a multi-tenant, SaaS-based, cloud-native content services platform consisting of a number of microservices, such as:

- User Authentication

- User Management

- Roles Management

- Folder and Content

- Elastic Search

In this example, let’s consider the Content Service, which deals with the documents present in the content repository.

The ongoing requirement to scale the service up and down for accessing specific documents, along with their content and metadata, during high-velocity concurrent transactions was an essential driver of our transition. We also needed a solution that could handle trillions of very small messages as well as high-volume data.

Splitting the monolith so that services could be built, deployed, and scaled independently was difficult. In return, the microservices make it possible for QA testers and presentation-layer developers to iterate quickly on the user interface, run A/B tests, and verify the new services.

Migration from EJB to Containers

Enterprise JavaBeans (EJB) had long been one of the main solutions for developing scalable server-side components.

Earlier, every software vendor produced application servers that conformed with the J2EE specification. The following is a list of the top players of J2EE application servers:

It quickly became apparent that a market for reusable server components that could be used elsewhere would not emerge, for several reasons:

- Since the J2EE specification was built on Java, other programming language workloads were unable to participate, thus lowering the footprint of reusable components

- Application servers evolved into a difficult platform for businesses to operate, requiring costly engineering work to keep the platform up and running

- Each application server vendor’s own extensions and features, built on top of the J2EE standards, limited the portability of components

The Force Awakens

The Newgen Engineering Team wanted something more sophisticated than just being able to package and run components inside a J2EE application server. Specifically, we wanted something more open, not governed by a small number of application server suppliers, and able to handle a variety of hardware platforms and programming languages.

We evaluated the Docker platform and found that it addressed the issues described above. On a machine running a Docker engine, Docker provides the basic building blocks for packaging and running programs written in any programming language. This introduced a level of abstraction that the J2EE application server was unable to provide, finally making it viable to create reusable server components.

Container management and orchestration was another crucial requirement. For this, we evaluated Kubernetes, which Google had open-sourced. It provides container orchestration for Docker containers as well as capabilities such as load balancing, auto-scaling, container health monitoring, and rolling updates.
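
To give a feel for how auto-scaling decides on a replica count, the sketch below applies the core rule that the Kubernetes documentation gives for the Horizontal Pod Autoscaler: the desired replica count is the current count multiplied by the ratio of the observed metric to the target metric, rounded up. The function name and the minimum and maximum bounds are illustrative assumptions, and the real autoscaler also applies a tolerance band and stabilization behaviour that are omitted here.

```python
import math


def desired_replicas(
    current_replicas: int,
    current_metric: float,
    target_metric: float,
    min_replicas: int = 1,   # illustrative bound, not taken from the article
    max_replicas: int = 10,  # illustrative bound, not taken from the article
) -> int:
    """Scale the replica count in proportion to observed vs. target load."""
    raw = math.ceil(current_replicas * current_metric / target_metric)
    # Keep the result inside the configured bounds.
    return max(min_replicas, min(max_replicas, raw))


# Four replicas averaging 90% CPU against a 60% target: scale out.
scale_out = desired_replicas(4, current_metric=90, target_metric=60)

# The same four replicas averaging 30% CPU: scale in.
scale_in = desired_replicas(4, current_metric=30, target_metric=60)

print(scale_out, scale_in)
```

Because the calculation is per service, a busy content service can grow while a quiet one shrinks.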

Choosing the Right Implementation

When an organization decides to switch to a microservices architecture, the biggest challenge in application design is ensuring that functional components communicate smoothly and that the system auto-scales smoothly when the workload changes.

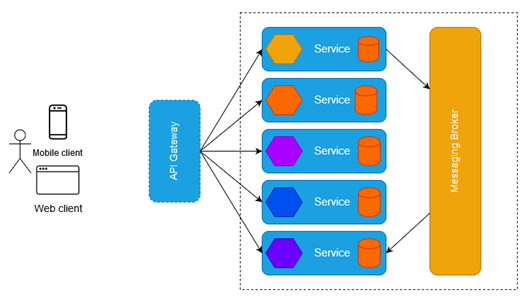

We settled on a design in which services expose the required features through RESTful APIs and sit behind an API Gateway. The Event Hub service ensures that the services can interact with one another, while the API Gateway links all the other services to the UI. Kubernetes orchestrates the container instances.
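
The division of labour between the gateway and the event hub can be sketched in a few lines. This is an in-memory illustration under stated assumptions, not the platform's actual implementation: the class names, the `/content` route, and the `content.uploaded` topic are all hypothetical, and a real deployment would use HTTP and a message broker rather than direct function calls.

```python
from collections import defaultdict


class EventHub:
    """Minimal publish/subscribe hub so services never call each other directly."""

    def __init__(self):
        self._subscribers = defaultdict(list)

    def subscribe(self, topic, handler):
        self._subscribers[topic].append(handler)

    def publish(self, topic, payload):
        for handler in self._subscribers[topic]:
            handler(payload)


class ApiGateway:
    """Single entry point for the UI; routes requests by path prefix."""

    def __init__(self):
        self._routes = {}

    def register(self, prefix, service):
        self._routes[prefix] = service

    def handle(self, path, body):
        for prefix, service in self._routes.items():
            if path.startswith(prefix):
                return service(path, body)
        return {"status": 404}


hub = EventHub()
indexed = []

# A hypothetical search service reacts to uploads without knowing the sender.
hub.subscribe("content.uploaded", lambda event: indexed.append(event["doc_id"]))


def content_service(path, body):
    # Store the document (omitted), then announce it to interested services.
    hub.publish("content.uploaded", {"doc_id": body["doc_id"]})
    return {"status": 201, "doc_id": body["doc_id"]}


gateway = ApiGateway()
gateway.register("/content", content_service)
response = gateway.handle("/content/upload", {"doc_id": "doc-1"})
```

The content service knows nothing about search; adding another subscriber requires no change to the publisher.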

To provide highly personalized and scalable user interfaces, autonomous and micro user interfaces were also designed for the essential microservices.

Let’s examine the fundamentals that underpin the microservices solution:

- Independent small-size services

- Database per service

- Data exchange between services

- Micro UIs for integration with the core microservices
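
The "database per service" and "data exchange between services" principles can be illustrated with a minimal sketch. It assumes two hypothetical services, each backed by its own in-memory SQLite database; the table layouts and method names are invented for the example. The point is that the content service never reads the users table and instead asks the user service through its interface.

```python
import sqlite3


class UserService:
    """Owns the users table; no other service touches this database."""

    def __init__(self):
        self._db = sqlite3.connect(":memory:")
        self._db.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, name TEXT)")

    def create_user(self, name):
        cursor = self._db.execute("INSERT INTO users (name) VALUES (?)", (name,))
        self._db.commit()
        return cursor.lastrowid

    def get_user_name(self, user_id):
        row = self._db.execute(
            "SELECT name FROM users WHERE id = ?", (user_id,)
        ).fetchone()
        return row[0] if row else None


class ContentService:
    """Owns the documents table and stores only the owner's ID."""

    def __init__(self, user_service):
        self._db = sqlite3.connect(":memory:")  # a separate database
        self._db.execute(
            "CREATE TABLE documents (id INTEGER PRIMARY KEY, title TEXT, owner_id INTEGER)"
        )
        self._users = user_service

    def add_document(self, title, owner_id):
        cursor = self._db.execute(
            "INSERT INTO documents (title, owner_id) VALUES (?, ?)", (title, owner_id)
        )
        self._db.commit()
        return cursor.lastrowid

    def describe(self, doc_id):
        row = self._db.execute(
            "SELECT title, owner_id FROM documents WHERE id = ?", (doc_id,)
        ).fetchone()
        if row is None:
            return None
        title, owner_id = row
        # Data exchange goes through the other service's API, not its tables.
        return f"{title} (owner: {self._users.get_user_name(owner_id)})"


users = UserService()
content = ContentService(users)
owner_id = users.create_user("Asha")
doc_id = content.add_document("Invoice.pdf", owner_id)
summary = content.describe(doc_id)
```

Either service can change its schema or storage engine without the other noticing, as long as the interface stays stable.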

Lessons Learnt

- Our journey began with a thorough understanding of the business requirements. An implementation strategy is required to define the boundaries of microservices. In our case, we combined business functions and their subfunctions into a single microservice

- In contrast to a monolithic infrastructure, the new architecture has several levels and components. Troubleshooting might take longer than normal, but if the team is comfortable with every facet of the design, it will take much less time to release new features

- In the world of microservices, communication between services poses a significant challenge. For service communication, we used Apache Kafka, an open-source distributed event streaming technology, as opposed to developing a bespoke message broker

- For the foreseeable future, it appears that creating containerized and serverless workloads is the best course of action

- Another crucial step on the road to microservices is monitoring. Microservices require centralized logging and other monitoring tools to keep track of each service’s demand and performance

- Versioning and releasing are quite straightforward with monolithic applications since everything is integrated. On the other hand, with microservices, some services could experience quick changes and call for quick releases, while other services stay largely the same.

- A service instance is deployed within a container, which is often created from an image when using microservices. This indicates that various versions of the service often correlate to distinct versions of container images

- To prevent human mistakes and accelerate this process, managing a service’s version and deployments should be automated
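
The link between service versions and container image versions is easy to automate. The sketch below is one possible approach, assuming semantic version numbers of the form `major.minor.patch`; the registry host and service name are placeholders, not values from our platform.

```python
def bump(version: str, part: str) -> str:
    """Return the next semantic version for a 'major', 'minor', or 'patch' change."""
    major, minor, patch = (int(piece) for piece in version.split("."))
    if part == "major":
        return f"{major + 1}.0.0"
    if part == "minor":
        return f"{major}.{minor + 1}.0"
    if part == "patch":
        return f"{major}.{minor}.{patch + 1}"
    raise ValueError(f"unknown version part: {part}")


def image_tag(registry: str, service: str, version: str) -> str:
    """Build the container image reference that corresponds to a service version."""
    return f"{registry}/{service}:{version}"


# Placeholder registry and service name, for illustration only.
next_version = bump("1.4.2", "minor")
tag = image_tag("registry.example.com", "content-service", next_version)
print(tag)
```

A release pipeline can call functions like these so that every deployed container is traceable to exactly one service version.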

Recommendations

- Begin small – Engineering teams should begin with the application’s most basic features, ideally those that are only loosely connected to the rest of the product. Developers can test these features as they build a continuous integration pipeline, and in doing so lay the foundational architecture for these and future services. This strategy lessens the risk of mistakes.

- Get rid of dependencies – The services need to be created in a way that makes them autonomous. Monoliths are expensive and sluggish, whereas microservices have the advantage of quicker delivery, because several separate services can be developed and released quickly. However, if these services are not entirely autonomous, that advantage is lost, because they must still wait for the monolith.

- Begin with decoupling quick-changing functionalities – Engineering teams should assess various functionalities and prioritize those that undergo the most change. This is due to the fact that these functional blocks are the ones that slow down the monolith the most. If they are separated, it will be simple to make changes without affecting how quickly the program runs.

- Limit inappropriate decoupling – It is recommended to keep some common services coupled to avoid over-decoupling and prevent cascading failures

- Get your hands dirty – Developers will encounter increasingly difficult features that are dispersed across the program after decoupling initial and simpler operations. It is not advised to just migrate them. Instead, they ought to disassemble these functions and progressively redefine them. Developers must assess these functions and restructure them such that they depend less on the monolith and more on each other

- Take into account rewriting functionalities – Re-evaluating all functionalities is necessary. Developers should avoid simply moving legacy code to the new system. Legacy code is often written to out-of-date standards, which makes it hard to reuse. It may also contain a lot of boilerplate and lack distinct domain concepts and boundaries. By rewriting certain portions of a program from scratch, developers can adhere to current best practices for microservices. Developers should be able to select the coding language or technology stack that is ideal for a given service

- Adopt a methodical strategy – Monolith to microservice migration is a difficult procedure. To ensure a smooth transition, engineering teams should be ready to take on the responsibility of gradually migrating the applications. Thanks to this iterative methodology, every new iteration will advance enterprises’ progress toward their objective of distributed, agile apps built in accordance with the microservices architecture

- Move in the direction of containers – For the foreseeable future, it appears that creating containerized and serverless workloads is the best course of action
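
One way to act on the recommendation to decouple quick-changing functionality first is to rank modules by how often they change. The sketch below assumes a hypothetical commit history in which each commit is a list of touched file paths and the top-level directory stands for a functional block; in practice the input would come from the version control log.

```python
from collections import Counter


def rank_by_change(commits):
    """Count, per top-level module, how many commits touched it."""
    counts = Counter()
    for files in commits:
        # A module is counted once per commit, however many files changed.
        modules = {path.split("/")[0] for path in files}
        counts.update(modules)
    return counts.most_common()


# Hypothetical history: each inner list is the set of files in one commit.
history = [
    ["content/upload.py", "content/metadata.py"],
    ["content/upload.py", "auth/login.py"],
    ["content/versioning.py"],
    ["search/index.py", "auth/login.py"],
]

ranking = rank_by_change(history)
print(ranking)
```

With this sample data, the content module changes most often and would be the first candidate for extraction.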

When Should You Say No to Microservices?

When it comes to software architecture, are microservices always the best option? Most likely not.

Not every app should be divided into microservices, because many are simply not big or complex enough to warrant it. A collection of applications made up of small to medium-sized discrete services is most likely already as segmented as it needs to be, even if those services contain several subordinate services. Decomposition into microservices would therefore increase the complexity instead of reducing it.

Additionally, if the interdependencies between the services are complicated, it is best to avoid a microservices design. Furthermore, microservices are solutions for complicated problems; if your company doesn’t deal with complex problems, you may well lack the infrastructure necessary to manage the complexity of microservices. Applications that depend on real-time data, such as those that use information from air traffic controllers, are one illustration of when microservices should not be used.

The Final Word!

Software application architecture has seen a transition from monolith to microservices. It is safe to say that if used properly, microservices can prove to be a blessing.

It’s a transformation that includes adjustments to the deployment and maintenance procedures in addition to modifications to the architecture and design. Along with the architectural modifications to divide services into tiny, manageable components, it demands we adopt automated deployment, monitoring, and scaling. Additionally, the migration to the cloud is facilitated and optimized by microservices. Microservices go hand in hand with deployment automation and containerization.

In a nutshell, microservices are not a quick fix for performance or scalability problems. They are a shift in perspective where we must consider the entire picture, from design through deployment and maintenance.
